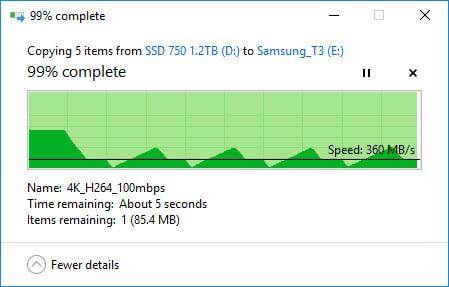

Windows' built-in copy function works well enough for small files. Problems begin when a transfer involves tens or hundreds of gigabytes, or thousands of files. At that point, File Explorer often slows to a crawl, stalls at “calculating time remaining”, or fails partway with little indication of what actually got copied.

The issue is not storage speed but how File Explorer handles large transfers. Before starting the copy, Windows tries to enumerate every file and estimate the total size and time. On large directories, this pre-calculation alone can take minutes or more, and the estimates remain unreliable throughout the process.
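To get a feel for why that up-front pass is expensive, here is a minimal Python sketch that walks a directory tree and totals the file count and size before anything is copied. It is only an illustration of the general idea, not how File Explorer is implemented, and `scan_tree` is a hypothetical helper name.

```python
import os


def scan_tree(root):
    """Return (file_count, total_bytes) for every file under root."""
    file_count = 0
    total_bytes = 0
    # os.walk has to visit every directory, so the cost grows with the
    # number of files rather than with the amount of data.
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                total_bytes += os.path.getsize(path)
            except OSError:
                # Unreadable or vanished file: leave it out of the totals.
                continue
            file_count += 1
    return file_count, total_bytes
```

On a folder with hundreds of thousands of small files, a scan like this can take a noticeable amount of time on its own, before a single byte has been transferred.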

Error handling is another weak point. If a file is locked or unreadable, Explorer often pauses the entire operation and waits for user input. In some cases the transfer stops altogether, leaving a partially copied directory with no built-in way to verify what succeeded. Resume support exists, but re-verification is slow and inefficient, especially on external or network drives.

Explorer also assumes that success equals integrity. It does not verify the copied data against a checksum. For backups, archives, or large media files, this means silent corruption can go unnoticed until the file is next opened.
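For readers who want that missing check, the following is a rough Python sketch of a post-copy verification pass using SHA-256 from the standard library. The helper names `sha256_of` and `find_mismatches` are made up for this example, and the approach simply re-reads both copies in full, so expect it to take a while on large datasets.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1024 * 1024):
    """Hash a file in chunks so large files never have to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def find_mismatches(source_root, dest_root):
    """Return relative paths that are missing or differ at the destination."""
    source_root = Path(source_root)
    dest_root = Path(dest_root)
    problems = []
    for src in sorted(source_root.rglob("*")):
        if not src.is_file():
            continue
        relative = src.relative_to(source_root)
        dst = dest_root / relative
        # A file counts as a problem if it is absent or its hash differs.
        if not dst.is_file() or sha256_of(src) != sha256_of(dst):
            problems.append(relative.as_posix())
    return problems
```

An empty list from `find_mismatches` means every source file has a byte-identical counterpart at the destination. It says nothing about extra files that exist only at the destination.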

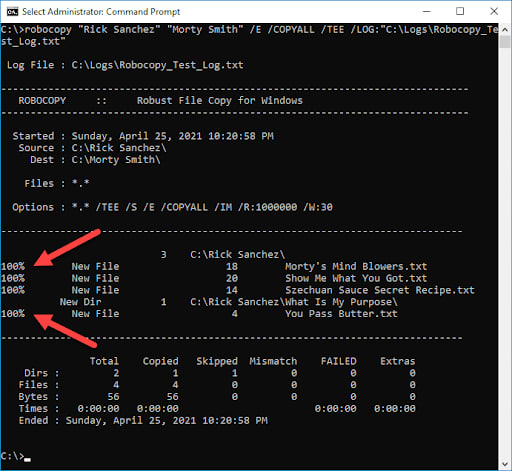

For large or critical transfers, command-line tools are more reliable. Windows includes Robocopy (Robust File Copy), designed for bulk data movement and directory mirroring. It avoids GUI overhead, supports retries, logs every action, and can cleanly resume interrupted transfers.

Robocopy also supports multithreaded copying, making it far more efficient when dealing with thousands of small files. There are options to control the number of retries, the wait time between them, and the behavior when files are locked. For backups or migrations, it can mirror directories exactly, reducing human error.

A simple example looks like this:

Robocopy D:\Source E:\Backup /MIR /R:3 /W:5 /MT:8

It mirrors one folder to another, retries failed files three times, waits five seconds between retries, and uses eight threads.
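If the copy needs to run from a script, the command can be wrapped roughly as follows. This is a sketch under a few assumptions: `build_mirror_command`, `copy_succeeded`, and `run_mirror` are hypothetical helper names, and the success check relies on Robocopy's documented exit codes being a bitmask in which values of 8 and above indicate failed copies or a fatal error.

```python
import subprocess


def build_mirror_command(source, destination, retries=3, wait_seconds=5, threads=8):
    """Assemble the argument list for the mirror command shown above."""
    return [
        "robocopy", source, destination,
        "/MIR",                # mirror the source tree
        f"/R:{retries}",       # retries per failed file
        f"/W:{wait_seconds}",  # seconds to wait between retries
        f"/MT:{threads}",      # number of copy threads
    ]


def copy_succeeded(exit_code):
    """Treat exit codes below 8 as success, per Robocopy's bitmask scheme."""
    return 0 <= exit_code < 8


def run_mirror(source, destination):
    """Run the mirror (Windows only) and report whether it finished cleanly."""
    # check=False because Robocopy returns non-zero codes even on success,
    # for example 1 when files were copied.
    result = subprocess.run(build_mirror_command(source, destination), check=False)
    return copy_succeeded(result.returncode)
```

Passing the arguments as a list avoids quoting problems with paths that contain spaces. Scripts that treat any non-zero exit code as a failure will misreport successful Robocopy runs, which is why the check is explicit here.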

Robocopy is not risk-free. An incorrect flag, especially with mirroring, can delete data at the destination. Users should test the command on non-critical folders first and read the output log carefully.
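Robocopy has its own list-only switch, /L, which reports what a command would do without copying or deleting anything. As a complementary check, the Python sketch below lists files that exist at the destination but not in the source, which are the ones a mirror would remove. The helper names are hypothetical, the comparison is case-sensitive (unlike Windows file systems by default), and empty directories are ignored.

```python
from pathlib import Path


def relative_files(root):
    """Collect every file under root as a path relative to root."""
    root = Path(root)
    return {p.relative_to(root).as_posix() for p in root.rglob("*") if p.is_file()}


def files_mirror_would_delete(source_root, dest_root):
    """Files present at the destination but absent from the source."""
    return sorted(relative_files(dest_root) - relative_files(source_root))
```

If the returned list contains anything worth keeping, the source and destination arguments are probably the wrong way round, or the destination is not the dedicated backup folder it was assumed to be.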

For occasional small transfers, File Explorer is fine. For professionals, content creators, or anyone who regularly moves large datasets, relying solely on Explorer leads to delays and uncertainty. Command-line tools trade convenience for control, but they complete transfers predictably and leave an audit trail if something goes wrong.

Thank you for being a Ghacks reader. The post Why Windows File Copy struggles with large files, and what works better appeared first on gHacks Technology News.